Was This AI Tool Tested in Children?

Research By: Lauren Erdman, PhD

Post Date: April 24, 2026 | Publish Date: April 24, 2026

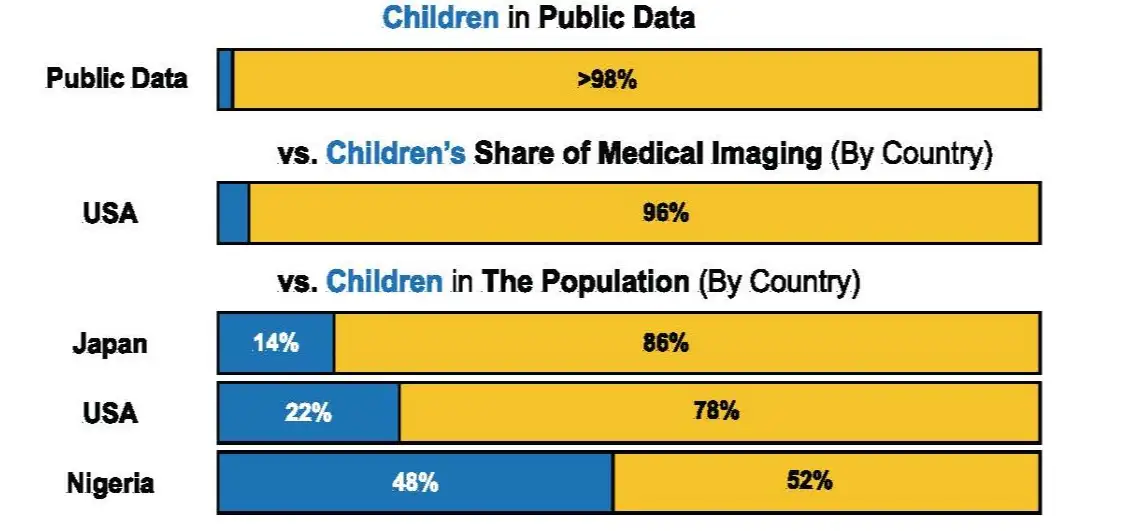

Children account for about 22% of the U.S. population, but a new study finds they make up fewer than 2% of the images in the public medical imaging datasets used to build tools that help radiologists read scans.

Artificial intelligence (AI) is becoming part of everyday healthcare, from helping radiologists read scans to flagging patients who may be getting worse. But a new study finds that too many AI tools are built using overwhelmingly adult data—meaning that these tools may perform unpredictably in children.

A multicenter research team led by experts at Cincinnati Children’s analyzed more than 200 public medical imaging datasets and published the results April 24, 2026, in the journal Nature Health. The team found that one-third of the images used to train AI tools lacked any age information. Among the remaining images, fewer than 2% involved children (6,026 out of 512,608).
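As a quick check of the arithmetic behind the "fewer than 2%" figure, the two counts quoted above can be divided directly. This is only an illustrative calculation; the variable names are chosen here and do not come from the study.

```python
# Counts quoted in the article for images that carried age information.
pediatric_images = 6_026
images_with_age = 512_608

# Share of age-labeled images that came from children.
pediatric_share = pediatric_images / images_with_age

print(f"Pediatric share of age-labeled images: {pediatric_share:.2%}")
```

The result is a little under 1.2%, consistent with the article's statement that children make up fewer than 2% of the age-labeled images.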

“When children are almost invisible in the datasets used to train and test medical AI, we can’t assume the technology will work the same way for them,” says corresponding author Lauren Erdman, PhD, a researcher with the James M. Anderson Center for Health Systems Excellence at Cincinnati Children’s. “Our findings show why age needs to be treated as a core part of data reporting and model evaluation—not an optional detail.”

To show what this gap can mean in practice, the team trained AI models to detect cardiomegaly (an enlarged heart) using adult chest X-rays, then tested the models on chest X-rays from healthy children. Across four large public adult datasets, the models produced a consistent pattern of age-related bias: the youngest children—especially those under age 2—were most likely to be incorrectly flagged as having cardiomegaly.
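One way to look for this kind of age-related bias is to compute the false-positive rate separately for each age group. Because every child in such a test set is healthy, any scan the model flags counts as a false positive. The sketch below is not the study's code: the function name, the age bins, and the example records are all hypothetical and are made up purely for illustration.

```python
from collections import defaultdict


def false_positive_rate_by_age(records, bins):
    """Return the share of healthy scans flagged as abnormal in each age bin.

    records: iterable of (age_in_years, flagged) pairs for healthy children.
    bins: list of (label, lower_inclusive, upper_exclusive) tuples.
    """
    flagged = defaultdict(int)
    total = defaultdict(int)
    for age, is_flagged in records:
        for label, lower, upper in bins:
            if lower <= age < upper:
                total[label] += 1
                flagged[label] += int(is_flagged)
                break
    # Skip bins with no scans so we never divide by zero.
    return {
        label: flagged[label] / total[label]
        for label, _, _ in bins
        if total[label] > 0
    }


# Hypothetical example data: (age in years, model flagged cardiomegaly?).
example_records = [
    (0.5, True), (1.0, False), (1.5, True),
    (4, False), (7, True), (10, False),
    (15, False), (16, False),
]
# Hypothetical age bins; the study's own groupings may differ.
example_bins = [("under 2", 0, 2), ("2 to 11", 2, 12), ("12 to 17", 12, 18)]

rates = false_positive_rate_by_age(example_records, example_bins)
for label, rate in rates.items():
    print(f"{label}: {rate:.0%} of healthy scans flagged")
```

A single overall error rate would hide the pattern the team describes. Breaking it out by age group makes a higher rate in the youngest children visible.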

Why including child imaging matters

- For researchers: Public datasets shape what gets studied. When pediatric images and age labels are missing, it becomes harder to build, compare, and audit models for children.

- For clinicians: As AI tools move into daily work, performance that looks strong in adults may not translate to infants, toddlers or teens.

- For families of children with chronic diseases: Kids with complex conditions often undergo repeated imaging and may be among the first to encounter new technologies.

The team expected to find a disparity between adult and child data, but they were surprised by the size of the gap—which also varied by imaging modality.

The smallest gap was found in ultrasound, where an estimated 6 adult ultrasound images exist for every pediatric ultrasound image. Larger gaps were seen in X-ray (128:1), MRI (298:1), and CT (317:1).
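These ratios can also be read as the approximate share of images that come from children. The short sketch below converts each reported adult-to-pediatric ratio into a pediatric percentage, assuming each ratio compares only age-labeled adult and pediatric images within that modality. That assumption is made here for illustration and is not stated in the article.

```python
# Adult images per pediatric image, as reported in the article.
adults_per_child = {
    "Ultrasound": 6,
    "X-ray": 128,
    "MRI": 298,
    "CT": 317,
}

# With N adult images per pediatric image, children supply 1 of every N + 1 images.
pediatric_share = {
    modality: 1 / (ratio + 1) for modality, ratio in adults_per_child.items()
}

for modality, share in pediatric_share.items():
    print(f"{modality}: about {share:.2%} pediatric")
```

Under that assumption, children supply roughly 14% of ultrasound images but well under 1% of X-ray, MRI, and CT images.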

“This disparity is a massive issue that can’t be just explained by the lower number of pediatric patients who receive imaging,” Erdman says. “The implications of this are that new models are being developed without an eye to the imaging most used in pediatrics (particularly ultrasound) and conditions which impact pediatric patients.”

What needs to happen next

The co-authors recommend these steps to better ensure that AI image interpretations are accurate and safe for children:

- Build and share more pediatric imaging datasets by design. Collaboration across children’s hospitals and research partners can help create publicly available, de-identified, AI-ready datasets. These datasets should be accessible to those developing cutting-edge machine learning methods and should represent kids across ages and conditions.

- Report age clearly and consistently. Dataset documentation should include patient counts and ages, ideally at the patient level, so researchers can measure representation and evaluate models by age group.

- Test models on children before clinical use. AI developers and health systems should require evidence of pediatric validation—especially for tools likely to be used in emergency care, cardiology, oncology and other high-stakes settings.

- Protect pediatric safety as AI scales. Funders, journals and regulators can accelerate progress by setting expectations for age-stratified evaluation and transparency.
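The age-reporting recommendation above could be supported by a small summary that dataset maintainers publish alongside their documentation. The sketch below is one hypothetical way to do that from patient-level ages. The function name, the field names, and the under-18 cutoff for "pediatric" are assumptions made here, not definitions taken from the study.

```python
def summarize_ages(ages, pediatric_cutoff=18):
    """Summarize age coverage for a dataset.

    ages: list of patient ages in years, with None where age is not recorded.
    pediatric_cutoff: assumed upper age limit (exclusive) for a pediatric patient.
    """
    known = [age for age in ages if age is not None]
    pediatric = sum(age < pediatric_cutoff for age in known)
    return {
        "total_patients": len(ages),
        "missing_age": len(ages) - len(known),
        "pediatric": pediatric,
        "adult": len(known) - pediatric,
        # Share is undefined when no ages are recorded at all.
        "pediatric_share_of_known": pediatric / len(known) if known else None,
    }


# Hypothetical patient-level ages for a tiny example dataset.
summary = summarize_ages([1, 34, None, 70, 12, None])
for field, value in summary.items():
    print(f"{field}: {value}")
```

Reporting the number of records with no age alongside the pediatric count keeps both gaps described in the article visible: missing age labels and low representation of children.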

About the study

Co-authors included Alexander Towbin, MD, with the Department of Radiology at Cincinnati Children’s, and experts from the Hospital for Sick Children in Toronto; the University of California, Berkeley; Cleveland Clinic; and Microsoft Research in Boston.

This research was enabled in part by support provided by Compute Ontario and the Digital Research Alliance of Canada.


| Detail | Value |
| --- | --- |
| Original title | Underrepresentation of children in public medical imaging datasets |
| Published in | Nature Health |
| Publish date | April 24, 2026 |

Research By

Lauren Erdman, PhD: “I am a computational researcher aiming to develop technologies to translate and transform pediatric clinical care using machine learning.”